|

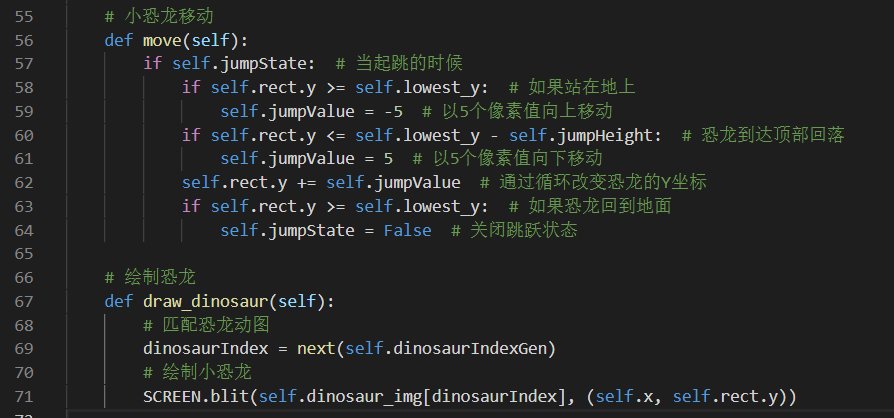

I'm a bit puzzled about pygame's `Surface.convert()`.

It's common sense that I should convert a surface after loading an image into it (presumably a jpg/png/etc. file), but what about surfaces on which I only use pygame's "primitives", like the `pygame.draw` functions or `Surface.fill()`? The official documentation is very vague about it. It says: "fastest format for blitting. It is a good idea to convert all Surfaces before they are blitted many times".

Consider simple ball-bouncing code that begins with `import pygame`, creates the display with `screen = pygame.display.set_mode((800, 600))`, and creates a background with `background = pygame.Surface(screen.get_size())`. It has 3 surfaces: screen, background and ball. They are blitted a lot, as source or dest. Would any of these surfaces benefit from a `.convert()`? A lot of pygame tutorials do that, even for a very simple background. Should I call `.convert()` when creating any surface, like `surface = pygame.Surface(...).convert()`?

And if such surfaces would benefit from a convert, when should I do so? After a `.fill()`, before, or doesn't it matter? Is once enough, or should I "reconvert" after each draw? What if I load an image? Why isn't the so-called "fastest pixel format" the default when creating new surfaces?

Any insights on `convert()` usage are highly appreciated, thanks!

`convert()` converts the `pygame.Surface` to the same pixel format as the one used for final display, the same one created by `pygame.display.set_mode()`. If you don't call it, then every time you blit a surface to your display surface a pixel conversion is needed. That is a per-pixel operation and very slow, whereas blitting a converted surface is a series of memory copies. You may not feel the difference on your octa-core development PC, but on constrained devices like handhelds (or if you just bring up your CPU counter), it makes a big difference. The recommendation is to convert as early as possible, preferably when you load or create your assets, or at least outside your game loop.

Why don't surfaces match the display by default? Good question, as it's an obvious newbie trap. I don't know the considerations that went into this design, but one issue is that you don't know until run time what your display pixel format will be. The user might specify 16-bit pixels and it might end up as some wacky format like BGR565. If you do some image processing at low bit depths, you might get results that you weren't expecting.

Another issue is that your display format most likely doesn't have alpha. If all surfaces matched the display format by default, then every time you tried to blit semi-transparent surfaces, or ones loaded from PNGs or GIFs with transparent pixels, you would end up with images over a black rectangle. If you need transparency, you can use `convert_alpha()`, which is marginally faster than an unconverted surface but not nearly as fast as `convert()`, because alpha blending is expensive. Pygame can't tell whether you need alpha surfaces or not, so you need to specify it yourself.

Of course, all this may be moot, because if you really cared about performance you would use a framework that was GPU accelerated (instead of pygame, which is built over SDL 1).

If you are just looking to load an image in pygame so that it has an alpha channel, this is how to do so: `self.image = pygame.image.load("image file path").convert_alpha()`. When you draw this image on the screen, it should include the transparency.

Alternatively, by calling `set_colorkey()` on the image after loading it, you can make the pink background transparent. It sets the colorkey to the background color, making that color invisible.

With `BLUE = (0, 0, 255, 255)`, calling `pygame.draw.rect(my_image, BLUE, my_image.get_rect(), 10)` draws only a 10-pixel border on `my_image`. Unlike other surfaces, this surface's default color is not black but transparent. That's why the black rectangle in the middle disappears.

The more we add to the image and modify it, the messier our code gets.
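The advice above to convert once, when a surface is created or loaded and outside the game loop, might look roughly like the sketch below. It is an illustration under stated assumptions, not the original ball-bouncing program: the "dummy" SDL video driver is set only so the code runs without opening a window, and the ball surface, colors, sizes and 10-frame loop are hypothetical stand-ins.

```python
import os

# Assumption: the SDL "dummy" video driver lets this sketch run headless.
# Remove this line to open a real window.
os.environ.setdefault("SDL_VIDEODRIVER", "dummy")

import pygame

pygame.init()
screen = pygame.display.set_mode((800, 600))

# Convert once, right after creation and before the game loop.
background = pygame.Surface(screen.get_size()).convert()
background.fill((0, 0, 0))  # drawing afterwards does not undo the conversion

# Hypothetical ball sprite: opaque surface with a colorkey for the corners.
ball = pygame.Surface((30, 30)).convert()
ball.fill((255, 0, 255))
ball.set_colorkey((255, 0, 255))
pygame.draw.circle(ball, (255, 255, 255), (15, 15), 15)

# The converted surface has the same bit depth as the display surface.
same_depth = background.get_bitsize() == screen.get_bitsize()

x, dx = 0, 5
for _ in range(10):  # stand-in for the real game loop
    x += dx
    # No convert() calls in here: both sources already match the display.
    screen.blit(background, (0, 0))
    screen.blit(ball, (x, 100))
    pygame.display.flip()

pygame.quit()
```

In this sketch, filling or drawing on a surface after `convert()` does not require converting it again, since drawing writes into the surface's existing pixel format.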
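The two transparency approaches mentioned in the text, a colorkey on an opaque surface and a per-pixel alpha surface, can be compared side by side. This is a minimal sketch with assumptions: the `pygame.SRCALPHA` flag is used to create the alpha surface (the text does not show how `my_image` was created), the "dummy" video driver is used so it runs without a window, and the sizes, positions and green screen color are made up for illustration.

```python
import os

# Assumption: headless run via SDL's "dummy" video driver.
os.environ.setdefault("SDL_VIDEODRIVER", "dummy")

import pygame

pygame.init()
screen = pygame.display.set_mode((200, 200))
screen.fill((0, 128, 0))  # hypothetical green backdrop

PINK = (255, 0, 255)
BLUE = (0, 0, 255, 255)

# Option 1: opaque surface with a pink background hidden by a colorkey.
keyed = pygame.Surface((50, 50)).convert()
keyed.fill(PINK)
pygame.draw.rect(keyed, BLUE, keyed.get_rect(), 10)
keyed.set_colorkey(PINK)  # pink pixels are skipped when blitting

# Option 2: per-pixel alpha surface, which starts out fully transparent.
my_image = pygame.Surface((50, 50), pygame.SRCALPHA).convert_alpha()
pygame.draw.rect(my_image, BLUE, my_image.get_rect(), 10)

screen.blit(keyed, (0, 0))
screen.blit(my_image, (100, 0))

# Sample the middle of each sprite and the border of the alpha sprite.
center_keyed = tuple(screen.get_at((25, 25)))[:3]
center_alpha = tuple(screen.get_at((125, 25)))[:3]
border_alpha = tuple(screen.get_at((102, 2)))[:3]

pygame.quit()
```

In both cases the backdrop should show through the middle of the sprite while the blue border is drawn. The colorkey version keeps the cheaper `convert()` format, which fits sprites that only need fully transparent or fully opaque pixels.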

|